We should always expect the Spanish Inquisition: which is to say, leaders should use the lessons of behavioural science to prepare for all outcomes – like Covid, or the fall of Kabul

Why are we surprised that we are surprised by events? Should, or could, our leaders have done better?

This September marks the 51st anniversary of the first broadcast of Monty Python’s legendary Spanish Inquisition sketch. The set-up for the sketch is laughably mundane-historical: Graham Chapman and Carol Cleveland are playing out a scene between a Jarrow mill worker and the mill owner’s wife. He (Chapman) tries to warn her (Cleveland) that “one of the cross beams has gone askew on the treadle”.

Frustrated at being unable to make himself understood, and at her baffled questions, Chapman splutters: “I didn’t expect some kind of Spanish Inquisition”.

In burst the chaotic and easily confused Cardinal Ximenez and his sidekicks, Cardinals Biggles and Fang (respectively, Michael Palin, Terry Jones and Terry Gilliam). “Our greatest weapon is surprise!” snarls Ximenez – before tripping into the now familiar routine, in which he adds more and more weapons to his boastful taunts, trying again and again to establish the definitive list of what makes the Inquisition successful. But “surprise” remains top of his ever-lengthening inventory.

Meanwhile: February 2022 will mark the 60th anniversary of the dark day on which Dick Rowe, head of A&R (artists and repertoire) at Decca Records, (in)famously turned down the Beatles. “Guitar groups are on their way out” was the excuse he gave the band’s manager, Brian Epstein – despite the Fab Four’s memorable audition performance, which you can still hear in various odd places online.

Who, to be fair, could have predicted their extraordinary subsequent success, the extent to which they changed global culture, and their abiding popularity in the 21st Century? Well, not Rowe. Not that day.

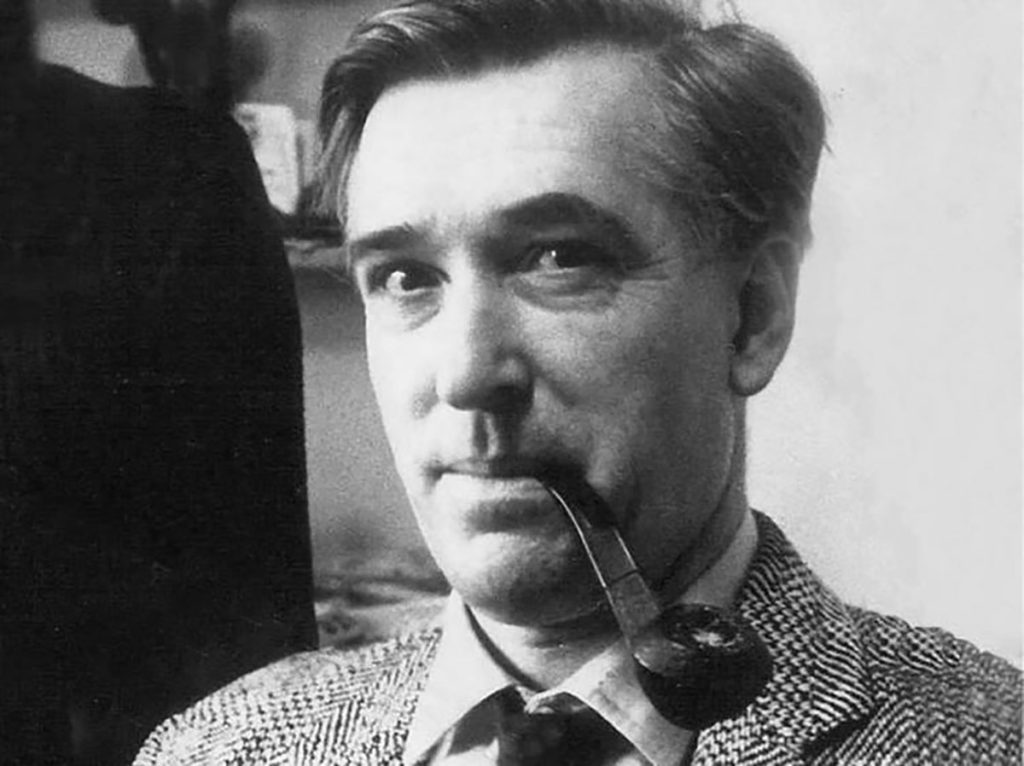

And finally: January 2022 marks the 58th anniversary of the premiere of Zulu, a movie famous for a number of things: its surprisingly accurate historical portrayal of the 1879 Battle of Rorke’s Drift; its sympathetic and largely positive view of the indigenous warriors; the bizarre, rousing chorus of ‘Men Of Harlech’ from the beleaguered redcoats in the closing battle scene, which was complete fiction; and Michael Caine’s (mostly) unmannered first major screen appearance.

Yet the pivotal scene remains one of perplexed surprise. Reporting the news from the scouting parties to his superiors, the Colour Sergeant (a straight-faced Nigel Green) announces they’ve spied “Zulus to the southwest…thousands of ‘em!”. Both officers (played by Caine and Stanley Baker) stand quietly stunned for a few moments – even though this is precisely why they are there in the first place: to defend the stores depot and hospital on the border with Zululand.

We all find ourselves in situations in which we are surprised by the unfolding of events, by wildcards and, more mundanely, by the simply unexpected. It is a truism that things – quite often – really don’t turn out the way we expect.

For most of us, and for most of the time, it is not too difficult to hold our hands up and admit: “Boy, I didn’t see that coming”. Somehow, we find a way to muddle through, to improvise some sort of adaptive solution or to call in a helping hand (which is why – for instance – roadside support is a good thing to pay for, as is household insurance). The late Donald Rumsfeld said it first and best: Stuff happens. As private citizens, we hedge as best we can against the unforeseen.

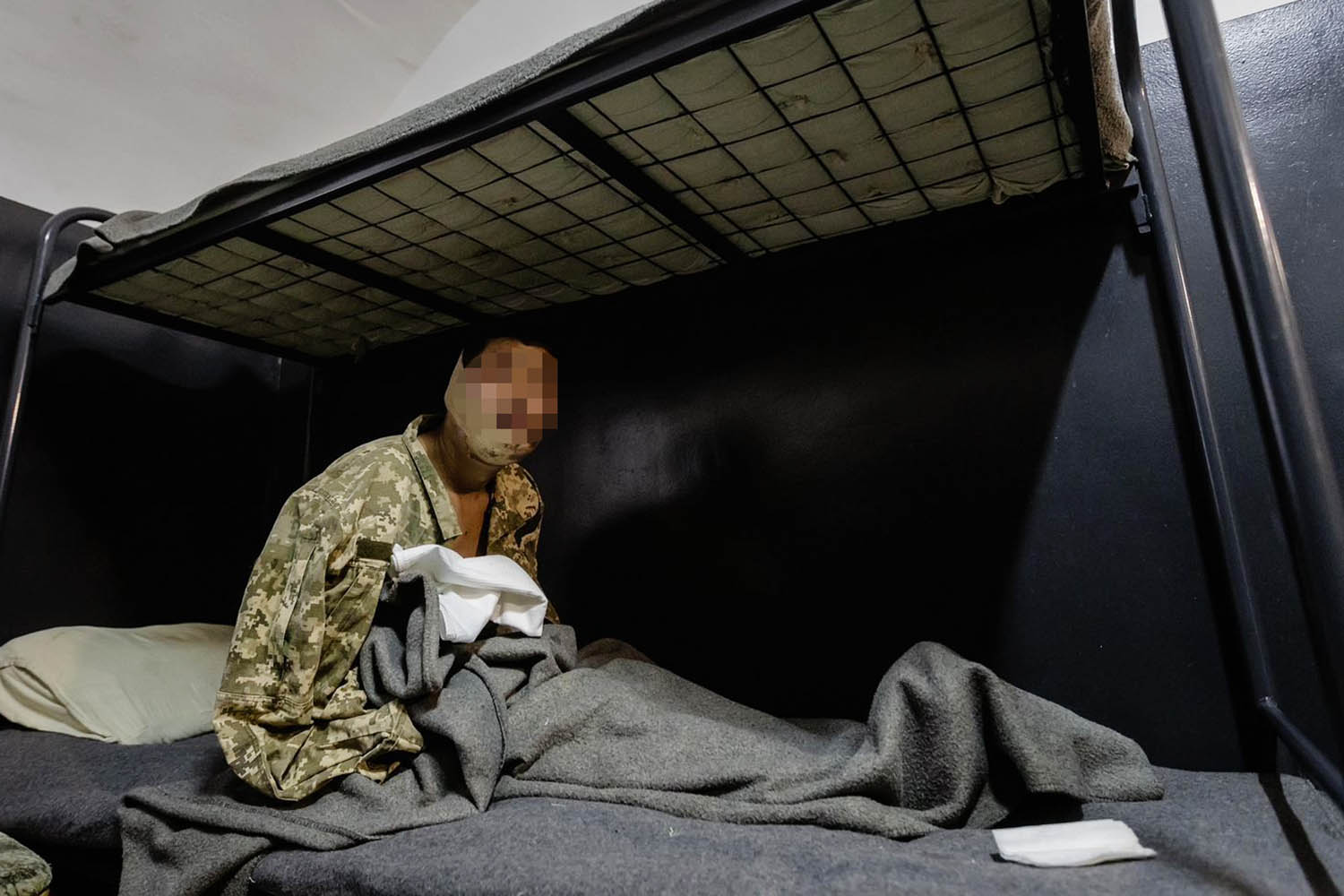

In the light of all this uncertainty – a feature of the universe that is reflected at subatomic level in the unpredictability of quantum physics – is there a measure of churlishness to all the criticism to which the UK Government has been subjected over the speed with which Kabul fell to the Taliban? Who, really, could have predicted that the Afghan state and army would collapse as quickly as they did? Who could have foreseen the bedlam at Kabul airport or the urgency of the need to evacuate many thousands of UK citizens and Afghans who had helped the British contingent?

This is where the cool analysis of behavioural science is of use and can cut through the political noise and recrimination. Try to dial down your fixation with what you would like to see happen, what you’d prefer to be the case, what would be easier or more profitable for you. Try to reorder the facts and tell alternative stories about where we are and how we got here.

Let’s reduce the picture to its essentials: for a start, it was (or should have been) absolutely clear that the Afghan state remained utterly dependent upon at least a minimum level of military support from the West. Nation-building takes many decades, not two. It cannot proceed without a basic measure of armed support, if only as an insurance policy.

Afghanistan has a complex, dynamic and (to westerners) arcane political culture and history which made Ashraf Ghani’s regime inherently unstable. Yes, the Taliban were overthrown by Operation Enduring Freedom in December 2001. But they never really went away – not fully – and, for 20 years, even as their fortunes ebbed and flowed, remained well-funded and resourced.

This was always a house of cards, an extraordinarily fragile structure, and one that was tremendously vulnerable to the smallest disruption – let alone a seismic change such as the hasty withdrawal of the remaining US military forces.

As was clear from Dominic Raab’s stumbling performance at the Foreign Affairs Select Committee this week, there was a spectrum of intelligence assessments put before UK ministers – ranging from warnings that collapse was imminent to more sanguine analyses, encouraging the view that the American retreat would not be rushed and, crucially, that the Afghan state would not fall in this geopolitical game of Jenga.

So: could the foreign secretary, the prime minister and the government as a whole have anticipated what has happened? Could and should they have planned for this dramatic eventuality?

The answers are: “Yes” and “Yes”. Modern leadership takes – or should take – account not only of what is likely to happen, or of what it wants or expects to happen, but also of High Impact Low Probability (HILP) events: pandemics, terrorist attacks, cyberwarfare, climate disasters. Increasingly, the sphere of the predictable will be handled by machines. What human beings are good at is imagining scenarios and gaming potential outcomes.

Whether managing a family, a business, a city or a country, the 21st-century leader should regard this as a core skill, rather than a useful additional competence, and prepare accordingly. This is not “Black Swan” territory, predicting the unpredictable: it’s basic planning. Nor, as we’ll see, should it necessitate months and months of work by super-smart mavericks: it’s simply a different way of doing things that just about any leader or leadership team can make part of their modus operandi.

There is a common-sense case for citizens expecting such abilities of those who lead them. The scale of the risks with which those in power deal daily makes this reasonable: it is part of the job description for those who aspire to run the country. We may forgive ourselves for not taking the washing in before rain or bumping the car into a lamppost due to someone else’s human error, while – quite consistently – taking a more elevated view of the responsibilities of those who hold the great offices of state.

What should alarm us is the extent to which this foggy vision at the top seems to have become a structural problem. In spite of many attempts to rewrite history, nobody at the apex of government appears to have anticipated the Covid pathogen becoming so dangerous and so disruptive as quickly as it did – in spite of the fact that a serious respiratory epidemic had been a line item on the “risk register” of every major nation for several years (“Sars++”). Nor does it seem to have made much difference that the UK had actually run more than one emergency planning exercise on the probable impact of such an outbreak on the NHS and other public services. The drills were conducted, but lessons, such as they were, were not implemented.

In fact, if you go further back, you could argue that poor forecasting has always been a feature of Brexiteer psychology. Though the Vote Leave campaign is now lionised as a model of disruptive politics, the truth is that its leaders did not expect to win the referendum and were preparing instead for a narrow defeat that would enable them to regroup as the Cameron government’s internal opposition, with Boris Johnson as chief dissident. For this reason (amongst others), nobody was remotely ready for the vexatious detail of Brexit negotiation – which helps to account for the constitutional and commercial mess of the past five years. Victory was never really part of the Vote Leave plan, so no-one prepared for it.

Even as we rightly expect better of our leaders, we also need to understand why even the best minds get caught out – and, in this respect, contemporary cognitive and behavioural science is, again, of immense use.

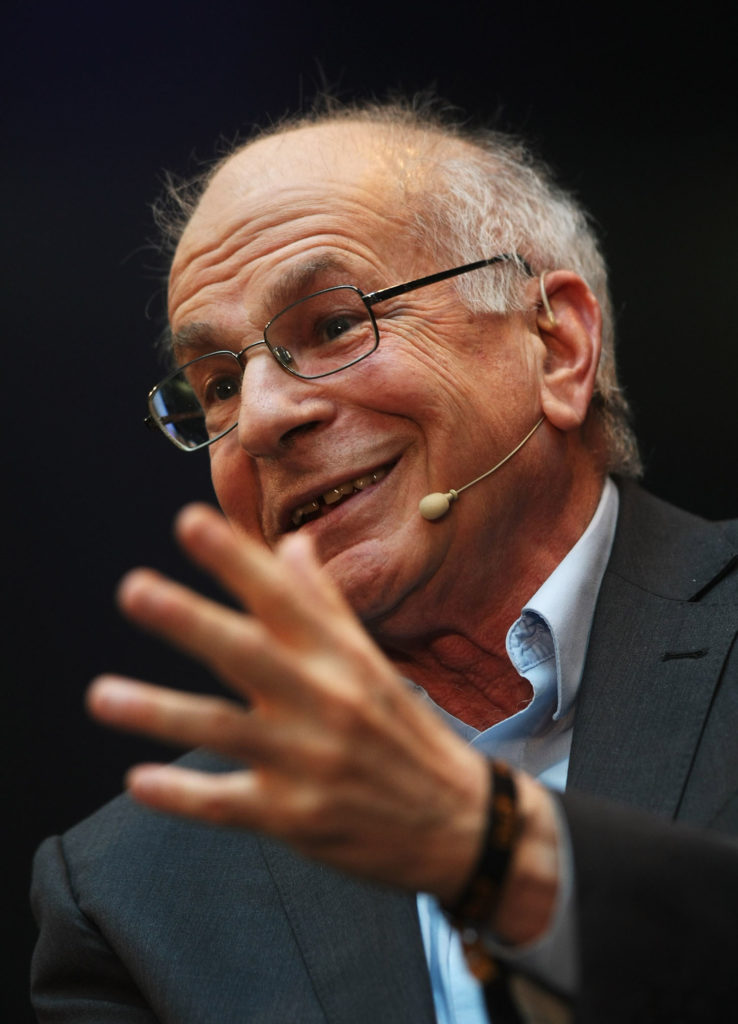

The Nobel Laureate Daniel Kahneman describes the mechanism of our minds as “lazy” – humans are to thinking as cats are to swimming. We can do it if we really have to, but it doesn’t come naturally.

Of course, we like to imagine that all our decisions are the consequence of orderly, rational thought. In practice, however, we tend to use shorthands (“heuristics”) to make mental activity less demanding. The flip side of this wondrous mental efficiency is that we are profoundly prone to systematic mental biases and errors: the benefit of a shorthand – clearly – is its efficiency; the danger, no less obviously, is that shorthands are approximations and blunt instruments.

Some of these biases are easy enough to spot: confirmation bias (first described by Peter Cathcart Wason) leads us to scan only for information and evidence that supports our previously held beliefs (“I literally didn’t see that coming”); status quo and normality biases (Kahneman and Dale T. Miller; William Samuelson and Richard Zeckhauser) lead us to ignore the possibility of a disaster that hasn’t happened before (“it really is so unlikely that the Americans would leave – and surely we’ve done enough to build an Afghan state that can stand up to the Taliban”); optimism bias (first described by Neil D. Weinstein, and more recently observed in British public sector decision making by the government’s own Behavioural Insights Team) is the excessive belief in the power of one’s own actions to solve problems (“our American friends would never just take their ball home – they’d talk to us first and I’m sure we could persuade them to stay a bit longer”); and of course, groupthink, the social thinking error that gave us the Challenger disaster (as elegantly described in Niall Ferguson’s recent book Doom) and the Grenfell tragedy. Groupthink suppresses information that might undermine the settled world view or position of an institution, tribe or organisation: it reflexively discards and buries alarming possibilities.

So what can we do to prevent this kind of disaster in the future? How easily – if at all – can the necessary skills be acquired? Do we, as Dominic Cummings might put it, need to recruit to government an army of smart young “super-forecasters”? Can technology be deployed to help?

The answer is actually very simple. It’s something my colleagues and I have been helping all sorts of organisations to do for a number of years.

First, take a step away from the traditionally understood single timeline: in fact, where we stand today can lead to many possible futures rather than the particular outcome that we imagine to be most likely and most consistent with our experience or our preferences.

Rather than waste time and energy trying to predict The (singular) Future, it is far better to explore Multiple Possible Futures – and not just on a spectrum of Low-Medium-High as forecasters traditionally do. Explore a range of outcomes beyond the familiar, comforting and popular options towards which the organisation’s subconscious consensus reflexively steers, and take account of the many unlikely and frankly highly improbable possibilities.

If you struggle with imagining new scenarios of this sort, pause, look around and consider how extraordinarily broad the realm of the conceivable truly is. As the sci-fi writer William Gibson famously observed: “The future is already here, it’s just not very evenly distributed”. Indeed, you might ask yourself whether it is an accident that so many “multiverse” storylines are cropping up in contemporary culture – not least in the Marvel Cinematic Universe. The psychologist Carl Jung would have understood this outcrop of what he called the “collective unconscious”. (Consider: a decade ago, the TV industry was obsessed with cheap, formatted reality TV; instead, now we are enjoying a blossoming of long, scripted, twisty drama in which many forks appear in many plotlines.)

By all means, allot each such outcome a probability coefficient. But bear in mind that such a number will be subject to all of the biases mentioned above. And remember that such a ranking exercise – this is more likely than that – is less useful than thinking through the granular implications of each such outcome. This is not a game of pin the tail on the donkey; we are not trying to identify the indisputably correct answer, the inexorable future. You might conscript an army of maverick super-forecasters in the hope of achieving perfect predictions; but that’s not what’s needed here.

The little-known work of neuroscientist David Ingvar and the renowned studies of scenario planner extraordinaire Arie de Geus make it clear that some advantage lies in just having described a scenario – so that when it does come to pass, from left field, it has some measure of familiarity and can at least be recognised for what it is.

That alone puts you one step ahead of the game. But the really important strategic decision – reflecting an instinct that has to be baked into the culture of an organisation – is to build preparedness for a large number of outcomes (rather than just predicting them). Don’t predict, prepare.

This kind of resilience is the product of a readiness to ask very basic questions. What will you do if a particular thing happens? What skills will you need if X – however apparently unlikely – comes to pass? What do you need to stockpile, just in case Y rears its head? What sorts of expertise and know-how are you going to need in the event of a particular outcome, and how can you start to construct that capability now?

This is simple rather than easy. Simple in that it doesn’t involve an army of “super-forecasters” and statisticians, but not easy for most organisations because it involves complete systemic change, a willingness to think far beyond the horizon, and a readiness to voice anxieties that may – initially at least – seem ridiculous or paranoid.

Another prerequisite is the commitment to revisiting this thinking on a regular basis. It should be the core work of leaders and their strategic teams, not a separate work-stream or the subject of an annual offsite meeting (pleasant though such meetings can be).

But the upside of this approach is huge, for governments as much as companies, civic groups or families. Imagine, again, the doors bursting open, Cardinals Ximenez, Biggles and Fang rushing in, all swivel-eyed and angry, and you telling them: “Funnily enough, I thought this might just happen, and here’s what we’re going to do”. It ruins the sketch – but saves the day.

Mark Earls is a writer who advises business on human behaviour and innovation. He is currently not writing his next book Memories of the Future.