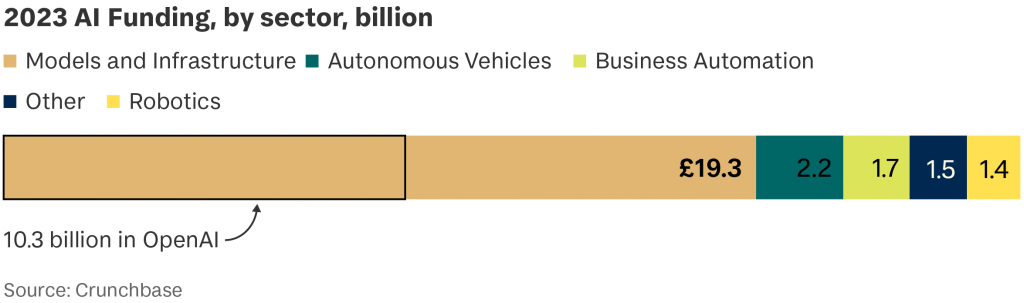

In the wake of the Bletchley Park summit, Tortoise research reveals that $53 billion in private equity funding has gone to AI companies in 2023.

Of that, $16 billion has gone to 15 companies, based mostly in the US and China, which specialise in building large general-purpose “foundation” AI models – the basis for generative AI. These model-building companies are the “shovel sellers” of the AI gold rush.

So what? Companies that buy “shovels” from AI firms are at the mercy of those firms' decisions on how AI safety and governance are implemented. With technological advancement running ahead of regulation, the AI shovel sellers have largely been left to mark their own homework.

Until now… The Bletchley Declaration, committing 29 governments to international collaboration on the development of responsible AI, has been heralded as a coup for Rishi Sunak.

More concrete, and arguably more significant, was a second statement jointly backed by a smaller group of western nations and AI industry giants. It commits them to government-led safety testing of private AI models. It’s welcome. So far, private-led approaches have been:

- Half-hearted. Stanford University’s Foundation Model Transparency Index found that companies in this sector are actually becoming less transparent. The highest score for risk disclosure among ten companies was 57 out of 100, a number the researchers say is “not worth crowing about”.

- Lacking benchmarks. There is no shared yardstick, so approaches vary widely. Anthropic, backed by Google, touts its “Constitutional AI” approach, with human principles “baked in” to the model from the outset. OpenAI publishes research on mitigating AI misuse. At the other end of the spectrum, Mistral AI, a French startup, released an open-source and largely unmoderated model in September. Researchers found it could easily be prompted to generate harmful content, including instructions on bomb-making.

- Silicon Valley-esque. The old logic of “move fast, break things” persists among AI companies, which build products and set their own testing standards to suit. What’s required instead is an equivalent of the FDA: a regulatory body that ensures only safe products make it to market.

At Bletchley, several of the biggest players signalled that they are taking safety and governance more seriously. But companies further down the chain of adoption still need to be alive to threats, including:

- Risk. Traditional lines of defence, such as lawyers and data governance committees, won’t suffice. AI risks aren’t easily quantifiable, and they often surface only when tools like ChatGPT are tested with prompts. “The problem is we don’t know how to evaluate risk properly with these [AI] systems,” says Yoshua Bengio, a member of the UN’s Scientific Advisory Board.

- Dependency. In 2022, 68 per cent of large UK companies adopted at least one AI technology. Foundation model-makers like OpenAI want a larger share of that market. They are offering to build custom models for companies with large proprietary datasets, with pricing starting at around $2 million. Given this deep concentration of money and expertise, Tim O’Reilly of UCL and the economist Mariana Mazzucato have warned against the rise of “Algorithmic Attention Rents”. The risk is that large AI companies could monopolise this market, and our attention with it.

We’ve been here before. “The internet, in its infrastructure and design, was meant to be open and non-commercial,” says Marietje Schaake, international policy fellow at Stanford’s Institute for Human-Centered Artificial Intelligence. “The way AI models are developed now are through high concentrations of capital, power and talent in the hands of a few. So this is a very different dynamic. The starting point of where we are now is much less open and accessible.”

We’re only at the start of this gold rush and, to mix metaphors, the infancy of AI. Guiding AI into responsible adulthood will require a holistic, safety-first approach. That means all companies need to stay alert to economic threats as well as existential ones.